kotaemon

An open-source, clean, and customizable RAG UI for chatting with your documents. Built with both end users and developers in mind.

Live Demo | Online Install | User Guide | Developer Guide | Feedback | Contact

Introduction

This project serves as a functional RAG UI for both end users who want to do QA on their documents and developers who want to build their own RAG pipeline.

For end users

- Clean & Minimalistic UI: A user-friendly interface for RAG-based QA.

- Support for Various LLMs: Compatible with LLM API providers (OpenAI, AzureOpenAI, Cohere, etc.) and local LLMs (via ollama and llama-cpp-python).

- Easy Installation: Simple scripts to get you started quickly.

For developers

- Framework for RAG Pipelines: Tools to build your own RAG-based document QA pipeline.

- Customizable UI: See your RAG pipeline in action with the provided UI, built with Gradio.

- Gradio Theme: If you use Gradio for development, check out our theme here: kotaemon-gradio-theme.

Key Features

- Host your own document QA (RAG) web-UI: Supports multi-user login. Organize your files in private/public collections, collaborate, and share your favorite chats with others.

- Organize your LLM & Embedding models: Supports both local LLMs & popular API providers (OpenAI, Azure, Ollama, Groq).

- Hybrid RAG pipeline: A sane default RAG pipeline with a hybrid (full-text & vector) retriever and re-ranking to ensure the best retrieval quality.

- Multi-modal QA support: Perform Question Answering on multiple documents, with support for figures and tables. Supports multi-modal document parsing (selectable options on the UI).

- Advanced citations with document preview: By default, the system provides detailed citations to ensure the correctness of LLM answers. View your citations (incl. relevance score) directly in the in-browser PDF viewer with highlights. A warning is shown when the retrieval pipeline returns articles with low relevance.

- Support complex reasoning methods: Use question decomposition to answer your complex/multi-hop questions. Supports agent-based reasoning with ReAct, ReWOO, and other agents.

- Configurable settings UI: You can adjust the most important aspects of the retrieval & generation process on the UI (incl. prompts).

- Extensible: Because the app is built on Gradio, you are free to customize or add any UI elements as you like. We also aim to support multiple strategies for document indexing & retrieval. A GraphRAG indexing pipeline is provided as an example.

Installation

If you are not a developer and just want to use the app, please check out our easy-to-follow User Guide. Download the .zip file from the latest release to get all the newest features and bug fixes.

System requirements

- Python >= 3.10

- Docker: optional, if you install with Docker

- Unstructured, if you want to process files other than .pdf, .html, .mhtml, and .xlsx documents. Installation steps differ depending on your operating system. Please visit the link and follow the specific instructions provided there.

With Docker (recommended)

- We support both lite & full versions of the Docker images. The full image also installs the extra unstructured packages, so it supports additional file types (.doc, .docx, ...) at the cost of a larger Docker image. For most users, the lite image should work well.

  - To use the lite version.

  - To use the full version.

- We currently support and test two platforms: linux/amd64 and linux/arm64 (for newer Macs). You can specify the platform by passing --platform in the docker run command. For example:

- Once everything is set up correctly, you can go to http://localhost:7860/ to access the WebUI.

- We use GHCR to store Docker images; all images can be found here.
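To decide which of the two tested platforms to pass to --platform, a minimal sketch like the following can map the local machine architecture to a platform string. The helper name docker_platform and the alias table are assumptions for illustration; they are not part of kotaemon or Docker.

```python
import platform
from typing import Optional

# Common architecture names mapped to the two platforms listed above.
# The alias table is an assumption; extend it if your machine reports another name.
_DOCKER_PLATFORMS = {
    "x86_64": "linux/amd64",
    "amd64": "linux/amd64",
    "arm64": "linux/arm64",
    "aarch64": "linux/arm64",
}


def docker_platform(machine: Optional[str] = None) -> str:
    """Return a --platform value for the given (or local) machine name."""
    name = (machine or platform.machine()).lower()
    if name not in _DOCKER_PLATFORMS:
        raise ValueError(f"architecture not among the tested platforms: {name!r}")
    return _DOCKER_PLATFORMS[name]
```

Calling docker_platform() with no argument uses the architecture reported by the local Python interpreter; the result can then be pasted after --platform in your docker run command.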

Without Docker

- Clone and install required packages on a fresh python environment.

-

Create a

.envfile in the root of this project. Use.env.exampleas a templateThe

.envfile is there to serve use cases where users want to pre-config the models before starting up the app (e.g. deploy the app on HF hub). The file will only be used to populate the db once upon the first run, it will no longer be used in consequent runs. -

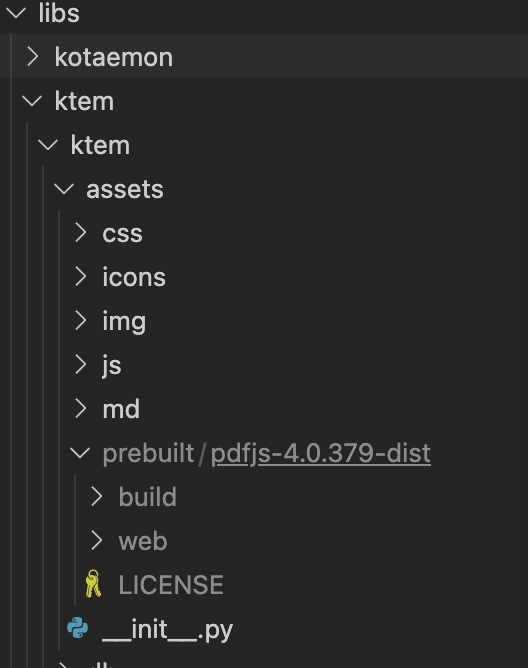

(Optional) To enable in-browser

PDF_JSviewer, download PDF_JS_DIST then extract it tolibs/ktem/ktem/assets/prebuilt

-

Start the web server:

- The app will be automatically launched in your browser.

- Default username and password are both

admin. You can set up additional users directly through the UI.

-

Check the

Resourcestab andLLMs and Embeddingsand ensure that yourapi_keyvalue is set correctly from your.envfile. If it is not set, you can set it there.
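Because the .env file is only read on the first run, it can help to check it before starting the app. The following is a minimal sketch, assuming the file contains plain KEY=VALUE lines; read_env_file and missing_keys are hypothetical helpers and do not reflect how the app itself loads the file.

```python
from pathlib import Path


def read_env_file(path: str) -> dict:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw in Path(path).read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and optional quotes around the value.
        values[key.strip()] = value.strip().strip("'\"")
    return values


def missing_keys(env: dict, required: list) -> list:
    """Return the required keys that are absent or empty."""
    return [key for key in required if not env.get(key)]
```

For example, missing_keys(read_env_file(".env"), ["OPENAI_API_KEY"]) returns an empty list when the key is present and non-empty.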

Setup GraphRAG

> [!NOTE]
> Currently, the GraphRAG feature only works with the OpenAI or Ollama API.

- Non-Docker Installation: If you are not using Docker, install GraphRAG with the following command:

- Setting Up API KEY: To use the GraphRAG retriever feature, ensure you set the GRAPHRAG_API_KEY environment variable. You can do this directly in your environment or by adding it to a .env file.

- Using Local Models and Custom Settings: If you want to use GraphRAG with local models (like Ollama) or customize the default LLM and other configurations, set the USE_CUSTOMIZED_GRAPHRAG_SETTING environment variable to true. Then, adjust your settings in the settings.yaml.example file.
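A quick way to confirm that both GraphRAG variables are visible to a Python process is sketched below. The function graphrag_env_status is a hypothetical helper, and treating only the case-insensitive string "true" as enabled is an assumption about how the flag is interpreted.

```python
import os
from typing import Mapping, Optional


def graphrag_env_status(environ: Optional[Mapping[str, str]] = None) -> dict:
    """Report whether the two GraphRAG-related variables are set."""
    env = os.environ if environ is None else environ
    flag = env.get("USE_CUSTOMIZED_GRAPHRAG_SETTING", "")
    return {
        # True when the key exists and is not just whitespace.
        "api_key_set": bool(env.get("GRAPHRAG_API_KEY", "").strip()),
        # Assumption: the flag counts as enabled only when it equals "true".
        "custom_settings": flag.strip().lower() == "true",
    }
```

Calling graphrag_env_status() with no argument inspects the current process environment.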

Setup Local Models (for local/private RAG)

See Local model setup.

Customize your application

- By default, all application data is stored in the ./ktem_app_data folder. You can back up or copy this folder to transfer your installation to a new machine.

- For advanced users or specific use cases, you can customize these files:

  - flowsettings.py

  - .env
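Backing up the data folder mentioned above can be done with the Python standard library. This is a minimal sketch; backup_app_data and the default archive name are hypothetical, and only the ./ktem_app_data path comes from this README.

```python
import shutil
from pathlib import Path


def backup_app_data(data_dir: str = "./ktem_app_data",
                    dest: str = "ktem_app_data_backup") -> str:
    """Zip the data folder and return the path of the created archive."""
    src = Path(data_dir)
    if not src.is_dir():
        raise FileNotFoundError(f"data folder not found: {src}")
    # Archive the folder itself (not only its contents) so that it can be
    # extracted in place on the new machine.
    return shutil.make_archive(dest, "zip", root_dir=src.parent, base_dir=src.name)
```

Extracting the resulting archive in the project root of the new machine recreates the ktem_app_data folder.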

flowsettings.py

This file contains the configuration of your application. You can use the example here as the starting point.

Notable settings

# setup your preferred document store (with full-text search capabilities)
KH_DOCSTORE=(Elasticsearch | LanceDB | SimpleFileDocumentStore)

# setup your preferred vectorstore (for vector-based search)
KH_VECTORSTORE=(ChromaDB | LanceDB | InMemory | Qdrant)

# Enable / disable multimodal QA
KH_REASONINGS_USE_MULTIMODAL=True

# Setup your new reasoning pipeline or modify existing one.
KH_REASONINGS = [
    "ktem.reasoning.simple.FullQAPipeline",
    "ktem.reasoning.simple.FullDecomposeQAPipeline",
    "ktem.reasoning.react.ReactAgentPipeline",
    "ktem.reasoning.rewoo.RewooAgentPipeline",
]

.env

This file provides another way to configure your models and credentials.

Configure model via the .env file

- Alternatively, you can configure the models via the .env file with the information needed to connect to the LLMs. This file is located in the folder of the application. If you don't see it, you can create one.

- Currently, the following providers are supported:

  - OpenAI

    In the .env file, set the OPENAI_API_KEY variable with your OpenAI API key to enable access to OpenAI's models. There are other variables that can be modified; feel free to edit them to fit your case. Otherwise, the default parameters should work for most people.

  - Azure OpenAI

    For OpenAI models via the Azure platform, you need to provide your Azure endpoint and API key. You might also need to provide your deployments' names for the chat model and the embedding model, depending on how you set up your Azure deployment.

  - Local Models

    - Using ollama OpenAI compatible server:

      - Install ollama and start the application.

      - Pull your model, for example:

      - Set the model names on the web UI and make them the default:

    - Using GGUF with llama-cpp-python

      You can search for and download an LLM to run locally from the Hugging Face Hub. Currently, these model formats are supported:

      - GGUF

      You should choose a model whose size is less than your device's available memory and leaves about 2 GB free. For example, if you have 16 GB of RAM in total, of which 12 GB is available, then you should choose a model that takes up at most 10 GB of RAM. Bigger models tend to give better generation but also take more processing time.

      Here are some recommendations and their size in memory:

      - Qwen1.5-1.8B-Chat-GGUF: around 2 GB

      Add a new LlamaCpp model with the provided model name on the web UI.
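The sizing rule above (keep about 2 GB of the available memory free) is simple arithmetic. The sketch below encodes it; the function names are hypothetical, and the 2 GB default is the approximate headroom stated in the text.

```python
def max_model_size_gb(available_gb: float, headroom_gb: float = 2.0) -> float:
    """Largest model size that still leaves the given headroom free."""
    return max(available_gb - headroom_gb, 0.0)


def model_fits(model_gb: float, available_gb: float, headroom_gb: float = 2.0) -> bool:
    """True when the model fits within the available memory minus headroom."""
    return model_gb <= max_model_size_gb(available_gb, headroom_gb)


# Worked example from the text: 12 GB available leaves room for a 10 GB model.
print(max_model_size_gb(12))  # 10.0
```

With 12 GB available, a model of around 2 GB such as Qwen1.5-1.8B-Chat-GGUF passes the check, while a 11 GB model does not.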

Adding your own RAG pipeline

Custom Reasoning Pipeline

- Check the default pipeline implementation here. You can make quick adjustments to how the default QA pipeline works.

- Add a new .py implementation in libs/ktem/ktem/reasoning/ and later include it in flowsettings to enable it on the UI.
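The entries in KH_REASONINGS are dotted paths of the form module.ClassName, so a new pipeline is referenced the same way. The sketch below shows one common way to check that such a path is importable before adding it. The resolver is a generic illustration and not necessarily how ktem loads pipelines; the module my_pipeline and class MyPipeline are hypothetical names.

```python
from importlib import import_module


def resolve_dotted_path(path: str):
    """Import 'package.module.Name' and return the named attribute."""
    module_name, _, attr = path.rpartition(".")
    if not module_name:
        raise ValueError(f"expected 'module.Name', got {path!r}")
    return getattr(import_module(module_name), attr)


# How the list in flowsettings.py might look after adding a new entry.
# "ktem.reasoning.my_pipeline.MyPipeline" is a hypothetical example.
KH_REASONINGS = [
    "ktem.reasoning.simple.FullQAPipeline",
    "ktem.reasoning.my_pipeline.MyPipeline",
]

# A standard-library path is used here so the sketch runs without kotaemon installed.
ordered_dict_cls = resolve_dotted_path("collections.OrderedDict")
```

In an environment where kotaemon is installed, calling resolve_dotted_path on each entry raises ImportError or AttributeError for paths that do not resolve.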

Custom Indexing Pipeline

- Check the sample implementation in libs/ktem/ktem/index/file/graph (more instructions WIP).


Contribution

Since our project is actively being developed, we greatly value your feedback and contributions. Please see our Contributing Guide to get started. Thank you to all our contributors!
